Ace Your Associate Cloud Engineer Exam with Practice Exams.

Google Cloud Certified – Associate Cloud Engineer Practice Exam (Q 50)

QUESTION 1

You need to create an autoscaling managed instance group for an HTTPS web application.

You want to make sure that unhealthy VMs are recreated.

What should you do?

- A. Create a health check on port 443 and use that when creating the Managed Instance Group.

- B. Select Multi-Zone instead of Single-Zone when creating the Managed Instance Group.

- C. In the Instance Template, add the label health-check.

- D. In the Instance Template, add a startup script that sends a heartbeat to the metadata server.

Correct Answer: A
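Option A works because a managed instance group's autohealing policy recreates VMs that its health check reports as unhealthy. The Python sketch below is a toy model of that decision, not Compute Engine's implementation. The `unhealthy_threshold` of 3 consecutive failed probes and the instance names are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class HealthCheck:
    """Simplified model of an HTTPS health check attached to a MIG's autohealing policy."""
    port: int = 443
    unhealthy_threshold: int = 3  # consecutive failures before "unhealthy" (assumed value)


def instances_to_recreate(check: HealthCheck, probes: dict[str, list[bool]]) -> list[str]:
    """Return instances whose most recent probes failed `unhealthy_threshold` times in a row."""
    to_recreate = []
    for name, results in probes.items():
        tail = results[-check.unhealthy_threshold:]
        if len(tail) == check.unhealthy_threshold and not any(tail):
            to_recreate.append(name)
    return to_recreate


probes = {
    "web-1": [True, True, True, True],
    "web-2": [True, False, False, False],  # three failures in a row
    "web-3": [False, False, True, False],  # recovered in between
}
print(instances_to_recreate(HealthCheck(), probes))  # ['web-2']
```

Without a health check (options B, C, and D), the group has no signal that the application is failing, so nothing triggers recreation.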

QUESTION 2

Your company has a Google Cloud Platform project that uses BigQuery for data warehousing.

Your data science team changes frequently and has few members. You need to allow members of this team to perform queries. You want to follow Google-recommended practices.

What should you do?

- A.

- 1. Create an IAM entry for each data scientist’s user account.

- 2. Assign the BigQuery jobUser role to the group.

- B.

- 1. Create an IAM entry for each data scientist’s user account.

- 2. Assign the BigQuery dataViewer user role to the group.

- C.

- 1. Create a dedicated Google group in Cloud Identity.

- 2. Add each data scientist’s user account to the group.

- 3. Assign the BigQuery jobUser role to the group.

- D.

- 1. Create a dedicated Google group in Cloud Identity.

- 2. Add each data scientist’s user account to the group.

- 3. Assign the BigQuery dataViewer user role to the group.

Correct Answer: C

Reference contents:

– #BigQuery predefined IAM roles – Access control with IAM | BigQuery | Google Cloud
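Option C keeps the IAM policy stable while team membership changes happen in Cloud Identity. The sketch below models a policy's `bindings` list (a `role` plus `members` such as `group:...`) as plain Python data rather than calling an API, to show that the group is bound to `roles/bigquery.jobUser` exactly once. The group address is hypothetical.

```python
import json


def add_member(policy: dict, role: str, member: str) -> dict:
    """Add `member` to the binding for `role`, creating the binding if needed (idempotent)."""
    for binding in policy.setdefault("bindings", []):
        if binding["role"] == role:
            if member not in binding["members"]:
                binding["members"].append(member)
            return policy
    policy["bindings"].append({"role": role, "members": [member]})
    return policy


policy = {"bindings": []}
# One binding for the whole team; joiners and leavers are managed in the group, not in IAM.
add_member(policy, "roles/bigquery.jobUser", "group:data-science@example.com")
add_member(policy, "roles/bigquery.jobUser", "group:data-science@example.com")  # no duplicate
print(json.dumps(policy, indent=2))
```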

QUESTION 3

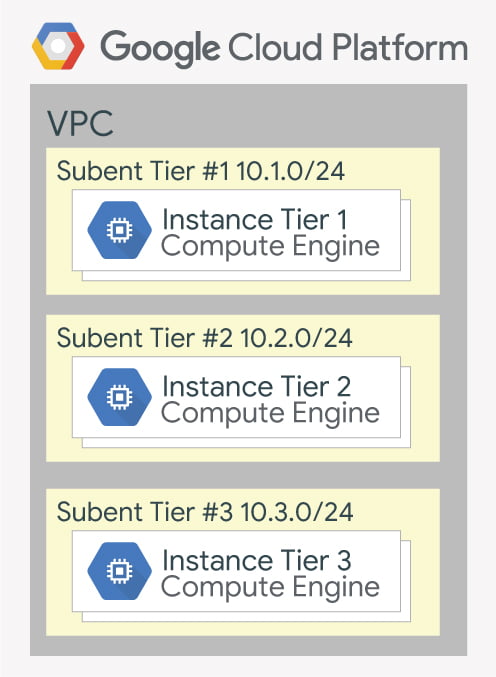

Your company has a 3-tier solution running on Google Compute Engine.

The configuration of the current infrastructure is shown below.

Each tier has a service account that is associated with all instances within it. You need to enable communication on TCP port 8080 between tiers as follows:

– Instances in tier #1 must communicate with tier #2.

– Instances in tier #2 must communicate with tier #3.

What should you do?

- A.

- 1. Create an ingress firewall rule with the following settings: Targets: all instances Source filter: IP ranges (with the range set to 10.0.2.0/24) Protocols: allow all

- 2. Create an ingress firewall rule with the following settings: Targets: all instances Source filter: IP ranges (with the range set to 10.0.1.0/24) Protocols: allow all

- B.

- 1. Create an ingress firewall rule with the following settings: Targets: all instances with tier #2 service account Source filter: all instances with tier #1 service account Protocols: allow TCP:8080

- 2. Create an ingress firewall rule with the following settings: Targets: all instances with tier #3 service account Source filter: all instances with tier #2 service account Protocols: allow TCP:8080

- C.

- 1. Create an ingress firewall rule with the following settings: Targets: all instances with tier #2 service account Source filter: all instances with tier #1 service account Protocols: allow all

- 2. Create an ingress firewall rule with the following settings: Targets: all instances with tier #3 service account Source filter: all instances with tier #2 service account Protocols: allow all

- D.

- 1. Create an egress firewall rule with the following settings: Targets: all instances Source filter: IP ranges (with the range set to 10.0.2.0/24) Protocols: allow TCP:8080

- 2. Create an egress firewall rule with the following settings: Targets: all instances Source filter: IP ranges (with the range set to 10.0.1.0/24) Protocols: allow TCP:8080

Correct Answer: B
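The service-account-based rules in option B can be modeled as a small lookup. The Python sketch below is a simplification: it treats VPC ingress as deny-by-default, allows traffic only when a rule matches the target service account, source service account, protocol, and port, and ignores rule priorities and stateful return traffic. The service account names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    target_sa: str  # rule applies to instances running as this service account
    source_sa: str  # traffic must come from instances running as this service account
    protocol: str
    port: int


RULES = [
    IngressRule(target_sa="tier2-sa", source_sa="tier1-sa", protocol="tcp", port=8080),
    IngressRule(target_sa="tier3-sa", source_sa="tier2-sa", protocol="tcp", port=8080),
]


def is_allowed(src_sa: str, dst_sa: str, protocol: str, port: int) -> bool:
    """Ingress is denied unless some rule matches all four fields."""
    return any(
        r.target_sa == dst_sa and r.source_sa == src_sa and r.protocol == protocol and r.port == port
        for r in RULES
    )


print(is_allowed("tier1-sa", "tier2-sa", "tcp", 8080))  # True
print(is_allowed("tier1-sa", "tier3-sa", "tcp", 8080))  # False: tier 1 may not skip tier 2
```

Option C would also pass the first check, but "allow all" opens every port between tiers instead of only TCP 8080.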

QUESTION 4

You are given a project with a single Virtual Private Cloud (VPC) and a single subnetwork in the us-central1 region.

There is a Google Compute Engine instance hosting an application in this subnetwork. You need to deploy a new instance in the same project in the europe-west1 region. This new instance needs access to the application. You want to follow Google-recommended practices.

What should you do?

- A.

- 1. Create a subnetwork in the same VPC, in europe-west1.

- 2. Create the new instance in the new subnetwork and use the first instance’s private address as the endpoint.

- B.

- 1. Create a VPC and a subnetwork in europe-west1.

- 2. Expose the application with an internal load balancer.

- 3. Create the new instance in the new subnetwork and use the load balancer’s address as the endpoint.

- C.

- 1. Create a subnetwork in the same VPC, in europe-west1.

- 2. Use Cloud VPN to connect the two subnetworks.

- 3. Create the new instance in the new subnetwork and use the first instance’s private address as the endpoint.

- D.

- 1. Create a VPC and a subnetwork in europe-west1.

- 2. Peer the 2 VPCs.

- 3. Create the new instance in the new subnetwork and use the first instance’s private address as the endpoint.

Correct Answer: A

QUESTION 5

Your projects incurred more costs than you expected last month.

Your research reveals that a development GKE container emitted a huge number of logs, which resulted in higher costs. You want to disable the logs quickly using the minimum number of steps.

What should you do?

- A.

- 1. Go to the Logs ingestion window in Stackdriver Logging, and disable the log source for the GKE container resource.

- B.

- 1. Go to the Logs ingestion window in Stackdriver Logging, and disable the log source for the GKE Cluster Operations resource.

- C.

- 1. Go to the GKE console, and delete existing clusters.

- 2. Recreate a new cluster.

- 3. Clear the option to enable legacy Stackdriver Logging.

- D.

- 1. Go to the GKE console, and delete existing clusters.

- 2. Recreate a new cluster.

- 3. Clear the option to enable legacy Stackdriver Monitoring.

Correct Answer: A

Reference contents:

– Monitored resources and services | Cloud Logging

QUESTION 6

You have a website hosted in the Google App Engine standard environment.

You want 1% of your users to see a new test version of the website. You want to minimize complexity.

What should you do?

- A. Deploy the new version in the same application and use the --migrate option.

- B. Deploy the new version in the same application and use the --splits option to give a weight of 99 to the current version and a weight of 1 to the new version.

- C. Create a new Google App Engine application in the same project. Deploy the new version in that application. Use the Google App Engine library to proxy 1% of the requests to the new version.

- D. Create a new Google App Engine application in the same project. Deploy the new version in that application. Configure your network load balancer to send 1% of the traffic to that new application.

Correct Answer: B

Reference contents:

– gcloud app services set-traffic | Google Cloud CLI Documentation

QUESTION 7

You have a web application deployed as a managed instance group.

You have a new version of the application to gradually deploy. Your web application is currently receiving live web traffic. You want to ensure that the available capacity does not decrease during the deployment.

What should you do?

- A. Perform a rolling-action start-update with maxSurge set to 0 and maxUnavailable set to 1.

- B. Perform a rolling-action start-update with maxSurge set to 1 and maxUnavailable set to 0.

- C. Create a new managed instance group with an updated instance template. Add the group to the backend service for the load balancer. When all instances in the new managed instance group are healthy, delete the old managed instance group.

- D. Create a new instance template with the new application version. Update the existing managed instance group with the new instance template. Delete the instances in the managed instance group to allow the managed instance group to recreate the instance using the new instance template.

Correct Answer: B

Reference contents:

– #Maximum unavailable – Automatically apply VM configuration updates in a MIG | Compute Engine Documentation | Google Cloud
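Why option B keeps capacity intact: with maxSurge=1 and maxUnavailable=0 the group creates an extra VM before removing an old one, whereas option A removes a VM first. The simulation below is a deliberately simplified model, not the MIG updater itself. It assumes new VMs serve traffic as soon as they are created and uses a hypothetical group of 4 instances.

```python
def simulate_rolling_update(target: int, max_surge: int, max_unavailable: int) -> tuple[int, int]:
    """Return (minimum serving instances, peak instance count) for a simplified rolling update.

    Each step first creates up to `max_surge` new-template VMs, then deletes one batch
    of old VMs, then creates the remaining replacements for that batch.
    """
    if max_surge + max_unavailable == 0:
        raise ValueError("maxSurge and maxUnavailable cannot both be 0")
    old, new = target, 0
    min_serving, peak = target, target
    while old > 0:
        batch = min(max_surge + max_unavailable, old)
        surge = min(max_surge, batch)
        new += surge                    # new VMs come up before anything is removed
        peak = max(peak, old + new)
        old -= batch                    # old VMs in this batch are deleted
        min_serving = min(min_serving, old + new)
        new += batch - surge            # recreate whatever was not pre-created
    return min_serving, peak


print(simulate_rolling_update(4, max_surge=1, max_unavailable=0))  # (4, 5): option B
print(simulate_rolling_update(4, max_surge=0, max_unavailable=1))  # (3, 4): option A
```

The trade-off is visible in the second number: option B briefly runs one instance above the target size, which costs a little more during the rollout.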

QUESTION 8

You are building an application that stores relational data from users.

Users across the globe will use this application. Your CTO is concerned about the scaling requirements because the size of the user base is unknown. You need to implement a database solution that can scale with your user growth with minimum configuration changes.

Which storage solution should you use?

- A. Google Cloud SQL

- B. Google Cloud Spanner

- C. Google Cloud Firestore

- D. Google Cloud Datastore

Correct Answer: B

QUESTION 9

You are the organization and billing administrator for your company.

The engineering team has the Project Creator role in the organization. You do not want the engineering team to be able to link projects to the billing account. Only the finance team should be able to link a project to a billing account, but they should not be able to make any other changes to projects.

What should you do?

- A. Assign the finance team only the Billing Account User role on the billing account.

- B. Assign the engineering team only the Billing Account User role on the billing account.

- C. Assign the finance team the Billing Account User role on the billing account and the Project Billing Manager role on the organization.

- D. Assign the engineering team the Billing Account User role on the billing account and the Project Billing Manager role on the organization.

Correct Answer: C

Reference contents:

– Overview of Cloud Billing access control

QUESTION 10

You have an application running in Google Kubernetes Engine (GKE) with cluster autoscaling enabled.

The application exposes a TCP endpoint. There are several replicas of this application. You have a Google Compute Engine instance in the same region, but in another Virtual Private Cloud (VPC), called gce-network, that has no overlapping IP ranges with the first VPC. This instance needs to connect to the application on GKE. You want to minimize effort.

What should you do?

- A.

- 1. In GKE, create a Service of type LoadBalancer that uses the application’s Pods as backend.

- 2. Set the service’s externalTrafficPolicy to Cluster.

- 3. Configure the Google Compute Engine instance to use the address of the load balancer that has been created.

- B.

- 1. In GKE, create a Service of type NodePort that uses the application’s Pods as backend.

- 2. Create a Google Compute Engine instance called proxy with 2 network interfaces, one in each VPC.

- 3. Use iptables on this instance to forward traffic from gce-network to the GKE nodes.

- 4. Configure the Google Compute Engine instance to use the address of proxy in gce-network as endpoint.

- C.

- 1. In GKE, create a Service of type LoadBalancer that uses the application’s Pods as backend.

- 2. Add an annotation to this service: cloud.google.com/load-balancer-type: Internal.

- 3. Peer the two VPCs together.

- 4. Configure the Google Compute Engine instance to use the address of the load balancer that has been created.

- D.

- 1. In GKE, create a Service of type LoadBalancer that uses the application’s Pods as backend.

- 2. Add a Google Cloud Armor Security Policy to the load balancer that whitelists the internal IPs of the MIG’s instances.

- 3. Configure the Google Compute Engine instance to use the address of the load balancer that has been created.

Correct Answer: C

Reference contents:

– Using an internal TCP/UDP load balancer | Kubernetes Engine Documentation | Google Cloud

– Configuring TCP/UDP load balancing | Kubernetes Engine Documentation | Google Cloud
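A minimal sketch of steps 1 and 2 of option C: the Service manifest expressed as a Python dict and printed as JSON, which `kubectl apply -f` accepts as well as YAML. The Service name, `app` label, and port 9000 are hypothetical. The annotation key is the one the question uses; newer GKE documentation uses `networking.gke.io/load-balancer-type` for the same purpose.

```python
import json


def internal_lb_service(name: str, app_label: str, port: int, target_port: int) -> dict:
    """Build a Service manifest that GKE provisions as an internal TCP/UDP load balancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            # Asks GKE for an internal load balancer instead of an external one.
            "annotations": {"cloud.google.com/load-balancer-type": "Internal"},
        },
        "spec": {
            "type": "LoadBalancer",
            "selector": {"app": app_label},
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


manifest = internal_lb_service("tcp-app-ilb", "tcp-app", 9000, 9000)
print(json.dumps(manifest, indent=2))
```

The internal load balancer's address lives in the GKE cluster's VPC, which is why option C also peers that VPC with gce-network.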

QUESTION 11

Your organization is a financial company that needs to store audit log files for 3 years.

Your organization has hundreds of Google Cloud projects. You need to implement a cost-effective approach for log file retention.

What should you do?

- A. Create a log sink that exports Cloud Audit logs to BigQuery.

- B. Create a log sink that exports Cloud Audit logs to a Coldline Storage bucket.

- C. Write a custom script that uses logging API to copy the logs from Stackdriver logs to BigQuery.

- D. Export these logs to Google Cloud Pub/Sub and write a Google Cloud Dataflow pipeline to store logs to Google Cloud SQL.

Correct Answer: B

Reference contents:

– Optimize storage in BigQuery | Google Cloud

– #Supported destinations – Routing and storage overview | Cloud Logging

QUESTION 12

You want to run a single caching HTTP reverse proxy on GCP for a latency-sensitive website.

This specific reverse proxy consumes almost no CPU. You want to have a 30-GB in-memory cache, and need an additional 2 GB of memory for the rest of the processes.

You want to minimize cost. How should you run this reverse proxy?

- A. Create a Cloud Memorystore for Redis instance with 32-GB capacity.

- B. Run it on Google Compute Engine, and choose a custom instance type with 6 vCPUs and 32 GB of memory.

- C. Package it in a container image, and run it on Google Kubernetes Engine, using n1-standard-32 instances as nodes.

- D. Run it on Google Compute Engine, choose the instance type n1-standard-1, and add an SSD persistent disk of 32 GB.

Correct Answer: B
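Option B's 6 vCPU figure is consistent with N1 custom machine type rules as we understand them: memory per vCPU must stay between 0.9 GB and 6.5 GB, and the vCPU count must be 1 or an even number. The sketch below hard-codes those limits as assumptions (verify them against current Compute Engine documentation) and computes the smallest valid vCPU count for 30 GB of cache plus 2 GB for other processes.

```python
import math

# Assumed N1 custom machine type limits; verify against current Compute Engine docs.
MIN_GB_PER_VCPU = 0.9
MAX_GB_PER_VCPU = 6.5


def min_n1_custom_vcpus(memory_gb: float) -> int:
    """Smallest vCPU count (1 or even) whose memory-per-vCPU ratio stays within the limits."""
    vcpus = max(1, math.ceil(memory_gb / MAX_GB_PER_VCPU))
    if vcpus > 1 and vcpus % 2 == 1:
        vcpus += 1  # N1 custom types allow 1 vCPU or an even number of vCPUs
    if memory_gb / vcpus < MIN_GB_PER_VCPU:
        raise ValueError(f"{memory_gb} GB is below the minimum for {vcpus} vCPU(s)")
    return vcpus


cache_gb, other_gb = 30, 2
print(min_n1_custom_vcpus(cache_gb + other_gb))  # 6, matching option B
```

A predefined n1-standard-32 node (option C) buys far more CPU than a proxy that "consumes almost no CPU" needs, which is why a custom shape is the cheaper fit.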

QUESTION 13

You are hosting an application on bare-metal servers in your own data center.

The application needs access to Google Cloud Storage. However, security policies prevent the servers hosting the application from having public IP addresses or access to the internet. You want to follow Google-recommended practices to provide the application with access to Google Cloud Storage.

What should you do?

- A.

- 1. Use nslookup to get the IP address for storage.googleapis.com.

- 2. Negotiate with the security team to be able to give a public IP address to the servers.

- 3. Only allow egress traffic from those servers to the IP addresses for storage.googleapis.com.

- B.

- 1. Using Cloud VPN, create a VPN tunnel to a Virtual Private Cloud (VPC) in Google Cloud.

- 2. In this VPC, create a Google Compute Engine instance and install the Squid proxy server on this instance.

- 3. Configure your servers to use that instance as a proxy to access Google Cloud Storage.

- C.

- 1. Use Migrate for Google Compute Engine (formerly known as Velostrata) to migrate those servers to Google Compute Engine.

- 2. Create an internal load balancer (ILB) that uses storage.googleapis.com as backend.

- 3. Configure your new instances to use this ILB as proxy.

- D.

- 1. Using Cloud VPN or Interconnect, create a tunnel to a VPC in Google Cloud.

- 2. Use Cloud Router to create a custom route advertisement for 199.36.153.4/30. Announce that network to your on-premises network through the VPN tunnel.

- 3. In your on-premises network, configure your DNS server to resolve *.googleapis.com as a CNAME to restricted.googleapis.com.

Correct Answer: D

Reference contents:

– Configuring Private Google Access for on-premises hosts | VPC
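Option D relies on the 199.36.153.4/30 prefix used by restricted.googleapis.com. The short sketch below uses Python's standard `ipaddress` module to enumerate the four addresses in that /30 (the addresses an on-premises DNS zone would typically map restricted.googleapis.com to) and to check whether a destination falls inside the prefix advertised by Cloud Router. The helper name is illustrative.

```python
import ipaddress

# Prefix named in option D for restricted.googleapis.com.
RESTRICTED_VIP = ipaddress.ip_network("199.36.153.4/30")

# Iterating a network yields every address in it, including the first and last.
addresses = [str(ip) for ip in RESTRICTED_VIP]
print(addresses)  # ['199.36.153.4', '199.36.153.5', '199.36.153.6', '199.36.153.7']


def routed_via_tunnel(dest: str) -> bool:
    """True if `dest` falls inside the prefix announced to the on-premises network."""
    return ipaddress.ip_address(dest) in RESTRICTED_VIP
```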

QUESTION 14

You want to deploy an application on Google Cloud Run that processes messages from a Google Cloud Pub/Sub topic.

You want to follow Google-recommended practices.

What should you do?

- A.

- 1. Create a Google Cloud Function that uses a Google Cloud Pub/Sub trigger on that topic.

- 2. Call your application on Google Cloud Run from the Google Cloud Function for every message.

- B.

- 1. Grant the Pub/Sub Subscriber role to the service account used by Google Cloud Run.

- 2. Create a Google Cloud Pub/Sub subscription for that topic.

- 3. Make your application pull messages from that subscription.

- C.

- 1. Create a service account.

- 2. Give the Google Cloud Run Invoker role to that service account for your Google Cloud Run application.

- 3. Create a Google Cloud Pub/Sub subscription that uses that service account and uses your Google Cloud Run application as the push endpoint.

- D.

- 1. Deploy your application on Google Cloud Run on GKE with the connectivity set to Internal.

- 2. Create a Google Cloud Pub/Sub subscription for that topic.

- 3. In the same Google Kubernetes Engine cluster as your application, deploy a container that takes the messages and sends them to your application.

Correct Answer: C

Reference contents:

– #Integrating with Pub/Sub – Using Pub/Sub with Cloud Run tutorial
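With option C, Pub/Sub delivers each message to the Cloud Run service as an HTTPS POST whose JSON body wraps the message in a `message` object with base64-encoded `data`. The sketch below decodes such a body using only the standard library; the sample envelope, message ID, and subscription path are made up for illustration, and a real handler would sit inside an HTTP framework.

```python
import base64
import json


def decode_push(body: bytes) -> tuple[str, str]:
    """Return (message_id, decoded_data) from a Pub/Sub push request body."""
    envelope = json.loads(body)
    message = envelope["message"]
    data = base64.b64decode(message.get("data", "")).decode("utf-8")
    return message["messageId"], data


# Hypothetical push body for illustration.
sample_body = json.dumps({
    "message": {
        "data": base64.b64encode(b"hello").decode("ascii"),
        "messageId": "123",
    },
    "subscription": "projects/my-project/subscriptions/run-push",
}).encode("utf-8")

print(decode_push(sample_body))  # ('123', 'hello')
```

Returning a success status code (for example, 204) from the handler acknowledges the message; an error status causes Pub/Sub to retry delivery.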

QUESTION 15

You need to deploy an application, which is packaged in a container image, in a new project.

The application exposes an HTTP endpoint and receives very few requests per day. You want to minimize costs.

What should you do?

- A. Deploy the container on Google Cloud Run.

- B. Deploy the container on Google Cloud Run on GKE.

- C. Deploy the container on Google App Engine Flexible.

- D. Deploy the container on GKE with cluster autoscaling and horizontal pod autoscaling enabled.

Correct Answer: A

QUESTION 16

Your company has an existing GCP organization with hundreds of projects and a billing account.

Your company recently acquired another company that also has hundreds of projects and its own billing account. You would like to consolidate all GCP costs of both GCP organizations onto a single invoice. You would like to consolidate all costs as of tomorrow.

What should you do?

- A. Link the acquired company’s projects to your company’s billing account.

- B. Configure the acquired company’s billing account and your company’s billing account to export the billing data into the same BigQuery dataset.

- C. Migrate the acquired company’s projects into your company’s GCP organization. Link the migrated projects to your company’s billing account.

- D. Create a new GCP organization and a new billing account. Migrate the acquired company’s projects and your company’s projects into the new GCP organization and link the projects to the new billing account.

Correct Answer: A

Reference contents:

– Google Cloud Platform Cross Org Billing | by Ferris Argyle | Medium

QUESTION 17

You built an application on Google Cloud that uses Google Cloud Spanner.

Your support team needs to monitor the environment but should not have access to table data. You need a streamlined solution to grant the correct permissions to your support team, and you want to follow Google-recommended practices.

What should you do?

- A. Add the support team group to the roles/monitoring.viewer role.

- B. Add the support team group to the roles/spanner.databaseUser role.

- C. Add the support team group to the roles/spanner.databaseReader role.

- D. Add the support team group to the roles/stackdriver.accounts.viewer role.

Correct Answer: A

Reference contents:

– Understanding roles | IAM Documentation | Google Cloud

QUESTION 18

For analysis purposes, you need to send all the logs from all of your Google Compute Engine instances to a BigQuery dataset called platform-logs.

You have already installed the Google Cloud Logging agent on all the instances. You want to minimize cost.

What should you do?

- A.

- 1. Give the BigQuery Data Editor role on the platform-logs dataset to the service accounts used by your instances.

- 2. Update your instances' metadata to add the following value: logs-destination: bq://platform-logs.

- B.

- 1. In Google Cloud Logging, create a logs export with a Google Cloud Pub/Sub topic called logs as a sink.

- 2. Create a Google Cloud Function that is triggered by messages in the logs topic.

- 3. Configure that Google Cloud Function to drop logs that are not from Google Compute Engine and to insert Google Compute Engine logs in the platform-logs dataset.

- C.

- 1. In Google Cloud Logging, create a filter to view only Google Compute Engine logs.

- 2. Click Create Export.

- 3. Choose BigQuery as Sink Service, and the platform-logs dataset as Sink Destination.

- D.

- 1. Create a Google Cloud Function that has the BigQuery User role on the platform-logs dataset.

- 2. Configure this Google Cloud Function to create a BigQuery Job that executes this query: INSERT INTO dataset.platform-logs (timestamp, log) SELECT timestamp, log FROM compute.logs WHERE timestamp > DATE_SUB(CURRENT_DATE(), INTERVAL 1 DAY)

- 3. Use Google Cloud Scheduler to trigger this Google Cloud Function once a day.

Correct Answer: C

Reference contents:

– Configure and manage sinks | Cloud Logging

QUESTION 19

You are using Google Cloud Deployment Manager to create a Google Kubernetes Engine cluster.

Using the same Google Cloud Deployment Manager deployment, you also want to create a DaemonSet in the kube-system namespace of the cluster. You want a solution that uses the fewest possible services.

What should you do?

- A. Add the clusters API as a new Type Provider in Google Cloud Deployment Manager, and use the new type to create the DaemonSet.

- B. Use the Google Cloud Deployment Manager Runtime Configurator to create a new Config resource that contains the DaemonSet definition.

- C. With Google Cloud Deployment Manager, create a Google Compute Engine instance with a startup script that uses kubectl to create the DaemonSet.

- D. In the cluster's definition in Google Cloud Deployment Manager, add metadata that has kube-system as key and the DaemonSet manifest as value.

Correct Answer: A

Reference contents:

– Adding an API as a type provider | Cloud Deployment Manager Documentation

– Cloud Deployment Manager & Kubernetes | by Daz Wilkin | Google Cloud – Community | Medium

QUESTION 20

You are building an application that will run in your data center.

The application will use Google Cloud Platform (GCP) services like Cloud AutoML. You created a service account that has appropriate access to Cloud AutoML. You need to enable authentication to the APIs from your on-premises environment.

What should you do?

- A. Use service account credentials in your on-premises application.

- B. Use gcloud to create a key file for the service account that has appropriate permissions.

- C. Set up direct interconnect between your data center and Google Cloud Platform to enable authentication for your on-premises applications.

- D. Go to the IAM & admin console, grant a user account permissions similar to the service account permissions, and use this user account for authentication from your data center.

Correct Answer: B

Reference contents:

– Before You Begin | AutoML Vision | Google Cloud

QUESTION 21

You are using Google Container Registry to centrally store your company’s container images in a separate project.

In another project, you want to create a Google Kubernetes Engine (GKE) cluster. You want to ensure that Kubernetes can download images from Google Container Registry.

What should you do?

- A. In the project where the images are stored, grant the Storage Object Viewer IAM role to the service account used by the Kubernetes nodes.

- B. When you create the GKE cluster, choose the Allow full access to all Cloud APIs option under Access scopes.

- C. Create a service account, and give it access to Google Cloud Storage. Create a P12 key for this service account and use it as an imagePullSecrets in Kubernetes.

- D. Configure the ACLs on each image in Google Cloud Storage to give read-only access to the default Google Compute Engine service account.

Correct Answer: A

Reference contents:

– #Permissions and roles – Access control with IAM | Container Registry documentation | Google Cloud

– #Predefined roles – IAM roles for Cloud Storage

QUESTION 22

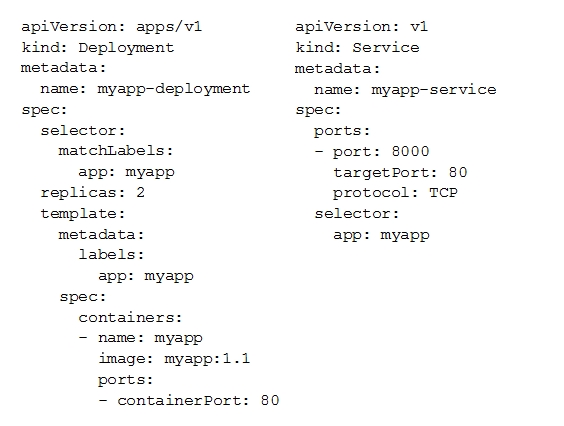

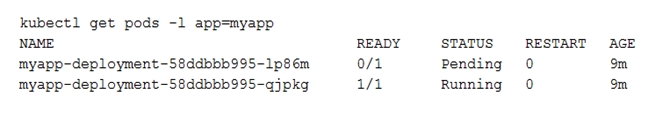

You deployed a new application inside your Google Kubernetes Engine cluster using the YAML file specified below.

You check the status of the deployed pods and notice that one of them is still in PENDING status:

You want to find out why the pod is stuck in pending status. What should you do?

- A. Review details of the myapp-service Service object and check for error messages.

- B. Review details of the myapp-deployment Deployment object and check for error messages.

- C. Review details of myapp-deployment-58ddbbb995-lp86m Pod and check for warning messages.

- D. View logs of the container in myapp-deployment-58ddbbb995-lp86m pod and check for warning messages.

Correct Answer: C

Reference contents:

– #Debugging Pods – Troubleshoot Applications | Kubernetes

QUESTION 23

You are setting up a Windows VM on Google Compute Engine and want to make sure you can log in to the VM via RDP.

What should you do?

- A. After the VM has been created, use your Google Account credentials to log in to the VM.

- B. After the VM has been created, use gcloud compute reset-windows-password to retrieve the login credentials for the VM.

- C. When creating the VM, add metadata to the instance using windows-password as the key and a password as the value.

- D. After the VM has been created, download the JSON private key for the default Google Compute Engine service account. Use the credentials in the JSON file to log in to the VM.

Correct Answer: B

Reference contents:

– gcloud beta compute reset-windows-password | Google Cloud CLI Documentation

– Generating credentials for Windows VMs | Compute Engine Documentation | Google Cloud

QUESTION 24

You want to configure an SSH connection to a single Google Compute Engine instance for users in the dev1 group.

This instance is the only resource in this particular Google Cloud Platform project that the dev1 users should be able to connect to.

What should you do?

- A. Set metadata to enable-oslogin=true for the instance. Grant the dev1 group the compute.osLogin role. Direct them to use the Google Cloud Shell to ssh to that instance.

- B. Set metadata to enable-oslogin=true for the instance. Set the service account to no service account for that instance. Direct them to use the Google Cloud Shell to ssh to that instance.

- C. Enable Block project-wide SSH keys for the instance. Generate an SSH key for each user in the dev1 group. Distribute the keys to dev1 users and direct them to use their third-party tools to connect.

- D. Enable Block project-wide SSH keys for the instance. Generate an SSH key and associate the key with that instance. Distribute the key to dev1 users and direct them to use their third-party tools to connect.

Correct Answer: A

Reference contents:

– #Granting OS Login IAM roles – Setting up OS Login | Compute Engine Documentation | Google Cloud

– Connecting to Linux VMs using advanced methods | Compute Engine Documentation | Google Cloud

QUESTION 25

You need to produce a list of the enabled Google Cloud Platform APIs for a GCP project using the gcloud command line in the Google Cloud Shell.

The project name is my-project.

What should you do?

- A. Run gcloud projects list to get the project ID, and then run gcloud services list --project <project ID>.

- B. Run gcloud init to set the current project to my-project, and then run gcloud services list --available.

- C. Run gcloud info to view the account value, and then run gcloud services list --account <Account>.

- D. Run gcloud projects describe <project ID> to verify the project value, and then run gcloud services list --available.

Correct Answer: A

Reference contents:

– #FLAGS – gcloud services list | Google Cloud CLI Documentation
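Option A is needed because `gcloud services list --project` expects a project ID, which can differ from the display name my-project. The sketch below shows that lookup in Python against output in the shape of `gcloud projects list --format=json`. The sample data is hypothetical, and the code only builds the follow-up command rather than running it.

```python
import json


def project_id_for(name: str, projects_json: str) -> str:
    """Find the project ID whose display name is `name` in `gcloud projects list --format=json` output."""
    matches = [p["projectId"] for p in json.loads(projects_json) if p["name"] == name]
    if len(matches) != 1:
        # Display names are not unique, so refuse to guess.
        raise LookupError(f"expected exactly one project named {name!r}, found {len(matches)}")
    return matches[0]


# Hypothetical output; real output contains more fields per project.
sample = json.dumps([
    {"projectId": "my-project-123456", "name": "my-project", "projectNumber": "111111111111"},
    {"projectId": "other-project-42", "name": "other", "projectNumber": "222222222222"},
])
pid = project_id_for("my-project", sample)
cmd = ["gcloud", "services", "list", "--project", pid]  # lists enabled APIs by default
print(" ".join(cmd))  # gcloud services list --project my-project-123456
```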

QUESTION 26

You are building a new version of an application hosted in a Google App Engine environment.

You want to test the new version with 1% of users before you completely switch your application over to the new version.

What should you do?

- A. Deploy a new version of your application in Google Kubernetes Engine instead of Google App Engine and then use Google Cloud Console to split traffic.

- B. Deploy a new version of your application in a Google Compute Engine instance instead of Google App Engine and then use Google Cloud Console to split traffic.

- C. Deploy a new version as a separate app in Google App Engine. Then configure Google App Engine using Google Cloud Console to split traffic between the two apps.

- D. Deploy a new version of your application in Google App Engine. Then go to Google App Engine settings in Google Cloud Console and split traffic between the current version and newly deployed versions accordingly.

Correct Answer: D

Reference contents:

– App Engine Application Platform | Google Cloud
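App Engine performs the split itself once both versions are deployed in the same service, for example with `gcloud app services set-traffic --splits=v1=0.99,v2=0.01 --split-by=cookie` (the version names are assumptions). To show what a sticky 99/1 split means, the sketch below hashes a hypothetical user ID into [0, 1) and assigns a version by cumulative weight. This only approximates the idea behind cookie-based splitting; it is not App Engine's actual algorithm.

```python
import hashlib


def pick_version(user_id: str, weights: dict[str, float]) -> str:
    """Map a user to a version in proportion to `weights`, the same way every time."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    point = (int.from_bytes(digest[:8], "big") >> 11) / 2**53  # 53 bits -> float in [0, 1)
    cumulative = 0.0
    for version, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return version
    return list(weights)[-1]  # guard against floating-point rounding in the weights


weights = {"v1": 0.99, "v2": 0.01}
users = [f"user-{i}" for i in range(100_000)]
share_v2 = sum(pick_version(u, weights) == "v2" for u in users) / len(users)
print(f"share routed to v2: {share_v2:.3%}")  # close to 1%
```

Hashing a stable identifier keeps each user on the same version across requests, which matters when the test version renders a different page.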

QUESTION 27

You need to provide a cost estimate for a Kubernetes cluster using the GCP pricing calculator for Kubernetes.

Your workload requires high IOPS, and you will also be using disk snapshots. You start by entering the number of nodes, average hours, and average days.

What should you do next?

- A. Fill in local SSD. Fill in persistent disk storage and snapshot storage.

- B. Fill in local SSD. Add estimated cost for cluster management.

- C. Select Add GPUs. Fill in persistent disk storage and snapshot storage.

- D. Select Add GPUs. Add estimated cost for cluster management.

Correct Answer: A

Reference contents:

– About local SSDs | Compute Engine Documentation | Google Cloud

QUESTION 28

You are using Google Kubernetes Engine with autoscaling enabled to host a new application.

You want to expose this new application to the public, using HTTPS on a public IP address.

What should you do?

- A. Create a Kubernetes Service of type NodePort for your application, and a Kubernetes Ingress to expose this Service via a Cloud Load Balancer.

- B. Create a Kubernetes Service of type ClusterIP for your application. Configure the public DNS name of your application using the IP of this Service.

- C. Create a Kubernetes Service of type NodePort to expose the application on port 443 of each node of the Kubernetes cluster. Configure the public DNS name of your application with the IP of every node of the cluster to achieve load-balancing.

- D. Create a HAProxy pod in the cluster to load-balance the traffic to all the pods of the application. Forward the public traffic to HAProxy with an iptables rule. Configure the DNS name of your application using the public IP of the node HAProxy is running on.

Correct Answer: A

Reference contents:

– Setting up HTTP(S) Load Balancing with Ingress | Kubernetes Engine | Google Cloud

– #Publishing Services (ServiceTypes) – Service | Kubernetes

QUESTION 29

You need to enable traffic between multiple groups of Google Compute Engine instances that are currently running in two different GCP projects.

Each group of Google Compute Engine instances is running in its own VPC.

What should you do?

- A. Verify that both projects are in a GCP Organization. Create a new VPC and add all instances.

- B. Verify that both projects are in a GCP Organization. Share the VPC from one project and request that the Google Compute Engine instances in the other project use this shared VPC.

- C. Verify that you are the Project Administrator of both projects. Create two new VPCs and add all instances.

- D. Verify that you are the Project Administrator of both projects. Create a new VPC and add all instances.

Correct Answer: B

Reference contents:

– Shared VPC overview | Google Cloud

QUESTION 30

You want to add a new auditor to a Google Cloud Platform project.

The auditor should be allowed to read, but not modify, all project items.

How should you configure the auditor’s permissions?

- A. Create a custom role with view-only project permissions. Add the user’s account to the custom role.

- B. Create a custom role with view-only service permissions. Add the user’s account to the custom role.

- C. Select the built-in IAM project Viewer role. Add the user’s account to this role.

- D. Select the built-in IAM service Viewer role. Add the user’s account to this role.

Correct Answer: C

Reference contents:

– Access control for projects with IAM | Resource Manager Documentation | Google Cloud

QUESTION 31

You are operating a Google Kubernetes Engine (GKE) cluster for your company where different teams can run non-production workloads.

Your Machine Learning (ML) team needs access to Nvidia Tesla P100 GPUs to train their models. You want to minimize effort and cost.

What should you do?

- A. Ask your ML team to add the accelerator: gpu annotation to their pod specification.

- B. Recreate all the nodes of the GKE cluster to enable GPUs on all of them.

- C. Create your own Kubernetes cluster on top of Google Compute Engine with nodes that have GPUs. Dedicate this cluster to your ML team.

- D. Add a new, GPU-enabled, node pool to the GKE cluster. Ask your ML team to add the cloud.google.com/gke-accelerator: nvidia-tesla-p100 nodeSelector to their pod specification.

Correct Answer: D

Reference contents:

– Running GPUs | Kubernetes Engine Documentation | Google Cloud
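In answer D, the pool itself would be created with something like `gcloud container node-pools create gpu-pool --cluster=CLUSTER --accelerator=type=nvidia-tesla-p100,count=1`, where `gpu-pool` and `CLUSTER` are placeholders. The ML team's side is a Pod spec that selects those nodes and requests the GPU. The minimal Python sketch below builds that spec as a dict. The Pod name and image are hypothetical, and `kubectl apply -f` accepts the printed JSON.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Pod spec intended for a GKE node pool that has NVIDIA Tesla P100 GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # GKE labels GPU nodes with their accelerator type; this pins the Pod to them.
            "nodeSelector": {"cloud.google.com/gke-accelerator": "nvidia-tesla-p100"},
            "containers": [{
                "name": name,
                "image": image,
                # Request the GPUs explicitly so the scheduler reserves them.
                "resources": {"limits": {"nvidia.com/gpu": gpus}},
            }],
        },
    }


print(json.dumps(gpu_pod_manifest("ml-train", "example.com/ml/trainer:latest"), indent=2))
```

Only Pods that carry this selector land on the GPU nodes, so the rest of the cluster keeps running on the cheaper, existing node pool.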

QUESTION 32

Your VMs are running in a subnet that has a subnet mask of 255.255.255.240.

The current subnet has no more free IP addresses and you require an additional 10 IP addresses for new VMs. The existing and new VMs should all be able to reach each other without additional routes.

What should you do?

- A. Use gcloud to expand the IP range of the current subnet.

- B. Delete the subnet, and recreate it using a wider range of IP addresses.

- C. Create a new project. Use Shared VPC to share the current network with the new project.

- D. Create a new subnet with the same starting IP but a wider range to overwrite the current subnet.

Correct Answer: A

Reference contents:

– gcloud compute networks subnets expand-ip-range | Google Cloud CLI Documentation
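Here is the arithmetic behind answer A. A 255.255.255.240 mask is a /28, or 16 addresses. Google Cloud reserves four addresses in every subnet's primary IPv4 range, which leaves 12 for VMs. Twelve existing VMs plus 10 new ones need 22 addresses, and a /27 provides 32 - 4 = 28. The Python sketch below does the same calculation.

```python
import ipaddress

# Google Cloud reserves four addresses in each subnet's primary IPv4 range:
# the network address, the default gateway, the second-to-last address, and broadcast.
RESERVED_PER_SUBNET = 4


def usable_hosts(prefix_len: int) -> int:
    """Number of addresses VMs can use in a subnet with this prefix length."""
    return 2 ** (32 - prefix_len) - RESERVED_PER_SUBNET


def prefix_for(required_hosts: int, current_prefix: int) -> int:
    """Shorten the prefix one bit at a time until the range fits required_hosts."""
    prefix = current_prefix
    while usable_hosts(prefix) < required_hosts:
        prefix -= 1
    return prefix


current = ipaddress.IPv4Network("0.0.0.0/255.255.255.240").prefixlen  # 28
needed = usable_hosts(current) + 10  # 12 existing VMs + 10 new ones
print(current, usable_hosts(current), prefix_for(needed, current))  # 28 12 27
```

With the target prefix known, the expansion is `gcloud compute networks subnets expand-ip-range SUBNET --region=REGION --prefix-length=27`, where SUBNET and REGION are placeholders. Existing VMs keep their addresses, and no new routes are needed because the range is still a single subnet.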

QUESTION 33

Your organization uses G Suite for communication and collaboration.

All users in your organization have a G Suite account. You want to grant some G Suite users access to your Google Cloud Platform project.

What should you do?

- A. Enable Cloud Identity in the Google Cloud Console for your domain.

- B. Grant them the required IAM roles using their G Suite email address.

- C. Create a CSV sheet with all users' email addresses. Use the gcloud command line tool to convert them into Google Cloud Platform accounts.

- D. In the G Suite console, add the users to a special group called cloud-console-users@yourdomain.com. Rely on the default behavior of the Google Cloud Platform to grant users access if they are members of this group.

Correct Answer: B

Reference contents:

– Creating and managing organizations | Resource Manager Documentation | Google Cloud

QUESTION 34

You have a Google Cloud Platform account with access to both production and development projects.

You need to create an automated process to list all compute instances in development and production projects on a daily basis.

What should you do?

- A. Create two configurations using gcloud config. Write a script that sets configurations as active, individually. For each configuration, use gcloud compute instances list to get a list of compute resources.

- B. Create two configurations using gsutil config. Write a script that sets configurations as active, individually. For each configuration, use gsutil compute instances list to get a list of compute resources.

- C. Go to Google Cloud Shell and export this information to Google Cloud Storage on a daily basis.

- D. Go to Google Cloud Console and export this information to Google Cloud SQL on a daily basis.

Correct Answer: A

Reference contents:

– gcloud compute instances list | Google Cloud CLI Documentation
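Here is answer A as a small Python sketch. It assumes that two gcloud configurations named `dev` and `prod` (hypothetical names) already exist and that each one points at its project through `gcloud config set project`. `daily_listing_commands` only builds the argument lists. `run_daily_listing` executes them with `subprocess.run` and requires gcloud on the PATH. You would still need to schedule the script to run daily, for example with cron.

```python
import subprocess
from typing import List


def daily_listing_commands(configurations: List[str]) -> List[List[str]]:
    """For each gcloud configuration: activate it, then list its instances."""
    commands = []
    for name in configurations:
        commands.append(["gcloud", "config", "configurations", "activate", name])
        commands.append(["gcloud", "compute", "instances", "list", "--format=json"])
    return commands


def run_daily_listing(configurations: List[str]) -> List[str]:
    """Run the commands in order and collect each instance list as JSON text."""
    outputs = []
    for argv in daily_listing_commands(configurations):
        result = subprocess.run(argv, check=True, capture_output=True, text=True)
        if argv[1] == "compute":
            outputs.append(result.stdout)
    return outputs
```

The commands must run in sequence: each `list` call reads whichever configuration the preceding `activate` call made active.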

QUESTION 35

You have a large 5-TB AVRO file stored in a Google Cloud Storage bucket.

Your analysts are proficient only in SQL and need access to the data stored in this file. You want to find a cost-effective way to complete their request as soon as possible.

What should you do?

- A. Load data in Google Cloud Datastore and run a SQL query against it.

- B. Create a BigQuery table and load data in BigQuery. Run a SQL query on this table and drop this table after you complete your request.

- C. Create external tables in BigQuery that point to Google Cloud Storage buckets and run a SQL query on these external tables to complete your request.

- D. Create a Hadoop cluster and copy the AVRO file to HDFS by compressing it. Load the file in a hive table and provide access to your analysts so that they can run SQL queries.

Correct Answer: C

Reference contents:

– Introduction to external data sources | BigQuery | Google Cloud

– Querying Cloud Storage data | BigQuery

– #Avro schemas – Loading Avro data from Cloud Storage | BigQuery
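Answer C needs only one DDL statement. Avro files carry their own schema, so BigQuery can infer the columns and the statement does not need a column list. The Python sketch below generates that statement. The table path and bucket URI in the example are hypothetical.

```python
def external_avro_table_ddl(table: str, gcs_uri: str) -> str:
    """BigQuery DDL for an external table over Avro data in Cloud Storage.
    The data stays in the bucket; BigQuery reads it at query time."""
    return (
        f"CREATE EXTERNAL TABLE `{table}`\n"
        f"OPTIONS (format = 'AVRO', uris = ['{gcs_uri}'])"
    )


print(external_avro_table_ddl("my-project.analytics.events_ext",
                              "gs://my-bucket/events.avro"))
```

The analysts can then query `my-project.analytics.events_ext` with ordinary SQL, and the 5 TB never has to be copied into BigQuery storage first.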

QUESTION 36

You need to verify that a Google Cloud Platform service account was created at a particular time.

What should you do?

- A. Filter the Activity log to view the Configuration category. Filter the Resource type to Service Account.

- B. Filter the Activity log to view the Configuration category. Filter the Resource type to Google Project.

- C. Filter the Activity log to view the Data Access category. Filter the Resource type to Service Account.

- D. Filter the Activity log to view the Data Access category. Filter the Resource type to Google Project.

Correct Answer: A

QUESTION 37

You deployed an LDAP server on Google Compute Engine that is reachable via TLS through port 636 using UDP.

You want to make sure it is reachable by clients over that port.

What should you do?

- A. Add the network tag allow-udp-636 to the VM instance running the LDAP server.

- B. Create a route called allow-udp-636 and set the next hop to be the VM instance running the LDAP server.

- C. Add a network tag of your choice to the instance. Create a firewall rule to allow ingress on UDP port 636 for that network tag.

- D. Add a network tag of your choice to the instance running the LDAP server. Create a firewall rule to allow egress on UDP port 636 for that network tag.

Correct Answer: C
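Answer C has two parts: tag the instance, for example with `gcloud compute instances add-tags INSTANCE --tags=ldap-server --zone=ZONE`, and then create an ingress rule that targets that tag. The Python sketch below builds the firewall-rule command. The tag `ldap-server`, the network `default`, and the source range are hypothetical choices.

```python
from typing import List


def ldap_firewall_command(network: str, tag: str, source_range: str) -> List[str]:
    """gcloud argv for an ingress rule opening UDP 636 to VMs carrying `tag`."""
    return [
        "gcloud", "compute", "firewall-rules", "create", f"allow-udp-636-{tag}",
        f"--network={network}",
        "--direction=INGRESS",        # clients connect *to* the LDAP server
        "--allow=udp:636",
        f"--target-tags={tag}",       # applies only to instances with this tag
        f"--source-ranges={source_range}",
    ]


print(" ".join(ldap_firewall_command("default", "ldap-server", "10.0.0.0/8")))
```

An egress rule (option D) would not help, because VPC firewalls allow all egress by default, while ingress is denied unless a rule allows it.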

QUESTION 38

You need to set a budget alert for use of Google Compute Engine services on one of the three Google Cloud Platform projects that you manage.

All three projects are linked to a single billing account.

What should you do?

- A. Verify that you are the project billing administrator. Select the associated billing account and create a budget and alert for the appropriate project.

- B. Verify that you are the project billing administrator. Select the associated billing account and create a budget and a custom alert.

- C. Verify that you are the project administrator. Select the associated billing account and create a budget for the appropriate project.

- D. Verify that you are the project administrator. Select the associated billing account and create a budget and a custom alert.

Correct Answer: A

Reference contents:

– #Billing roles – Understanding roles | IAM Documentation | Google Cloud

QUESTION 39

You are migrating a production-critical on-premises application that requires 96 vCPUs to perform its task.

You want to make sure the application runs in a similar environment on GCP.

What should you do?

- A. When creating the VM, use machine type n1-standard-96.

- B. When creating the VM, use Intel Skylake as the CPU platform.

- C. Create the VM using Google Compute Engine default settings. Use gcloud to modify the running instance to have 96 vCPUs.

- D. Start the VM using Google Compute Engine default settings, and adjust as you go based on Rightsizing Recommendations.

Correct Answer: A

QUESTION 40

You want to configure a solution for archiving data in a Google Cloud Storage bucket.

The solution must be cost-effective. Data with multiple versions should be archived after 30 days. Previous versions are accessed once a month for reporting. This archive data is also occasionally updated at month-end.

What should you do?

- A. Add a bucket lifecycle rule that archives data with newer versions after 30 days to Coldline Storage.

- B. Add a bucket lifecycle rule that archives data with newer versions after 30 days to Nearline Storage.

- C. Add a bucket lifecycle rule that archives data from regional storage after 30 days to Coldline Storage.

- D. Add a bucket lifecycle rule that archives data from regional storage after 30 days to Nearline Storage.

Correct Answer: B

Reference contents:

– Manage object lifecycles | Cloud Storage

– #NumberOfNewerVersions – Object Lifecycle Management | Cloud Storage
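Answer B as a lifecycle configuration. Nearline fits data that is read about once a month and has a 30-day minimum storage duration. Coldline has a 90-day minimum and higher retrieval costs, which the monthly reads and month-end updates would keep triggering. The Python sketch below builds the JSON. The 30-day age and the one-newer-version threshold come from the question, and the rest is a minimal assumption.

```python
import json


def nearline_versions_rule(age_days: int = 30) -> dict:
    """Lifecycle config: move object versions that already have a newer
    version, and are at least `age_days` old, to Nearline Storage."""
    return {
        "rule": [
            {
                "action": {"type": "SetStorageClass", "storageClass": "NEARLINE"},
                "condition": {
                    "age": age_days,         # days since the object was created
                    "numNewerVersions": 1,   # only versions that have been superseded
                },
            }
        ]
    }


print(json.dumps(nearline_versions_rule(), indent=2))
```

Save the printed JSON as `lifecycle.json` and apply it with `gsutil lifecycle set lifecycle.json gs://BUCKET` (BUCKET is a placeholder). The bucket needs Object Versioning enabled (`gsutil versioning set on gs://BUCKET`) for older versions to exist at all.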

QUESTION 41

Your company’s infrastructure is on-premises, but all machines are running at maximum capacity.

You want to burst to Google Cloud. The workloads on Google Cloud must be able to communicate directly with the workloads on-premises using a private IP range.

What should you do?

- A. In Google Cloud, configure the VPC as a host for Shared VPC.

- B. In Google Cloud, configure the VPC for VPC Network Peering.

- C. Create bastion hosts both in your on-premises environment and on Google Cloud. Configure both as proxy servers using their public IP addresses.

- D. Set up Cloud VPN between the infrastructure on-premises and Google Cloud.

Correct Answer: D

Reference contents:

– Cloud Hybrid Connectivity Faster Networking | Google Cloud

QUESTION 42

You want to select and configure a solution for storing and archiving data on Google Cloud Platform.

You need to support compliance objectives for data from one geographic location. This data is archived after 30 days and needs to be accessed annually.

What should you do?

- A. Select Multi-Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Coldline Storage.

- B. Select Multi-Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Nearline Storage.

- C. Select Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Nearline Storage.

- D. Select Regional Storage. Add a bucket lifecycle rule that archives data after 30 days to Coldline Storage.

Correct Answer: D

Reference contents:

– Storage classes | Google Cloud

QUESTION 43

Your company uses BigQuery for data warehousing.

Over time, many different business units in your company have created 1000+ datasets across hundreds of projects. Your CIO wants you to examine all datasets to find tables that contain an employee_ssn column. You want to minimize effort in performing this task.

What should you do?

- A. Go to Data Catalog and search for employee_ssn in the search box.

- B. Write a shell script that uses the bq command line tool to loop through all the projects in your organization.

- C. Write a script that loops through all the projects in your organization and runs a query on INFORMATION_SCHEMA.COLUMNS view to find the employee_ssn column.

- D. Write a Google Cloud Dataflow job that loops through all the projects in your organization and runs a query on INFORMATION_SCHEMA.COLUMNS view to find employee_ssn column.

Correct Answer: A

QUESTION 44

You create a Deployment with 2 replicas in a Google Kubernetes Engine cluster that has a single preemptible node pool.

After a few minutes, you use kubectl to examine the status of your Pods and observe that one of them is still in Pending status.

What is the most likely cause?

- A. The pending Pod’s resource requests are too large to fit on a single node of the cluster.

- B. Too many Pods are already running in the cluster, and there are not enough resources left to schedule the pending Pod.

- C. The node pool is configured with a service account that does not have permission to pull the container image used by the pending Pod.

- D. The pending Pod was originally scheduled on a node that has been preempted between the creation of the Deployment and your verification of the Pods' status. It is currently being rescheduled on a new node.

Correct Answer: B

Reference contents:

– Kubernetes Troubleshooting Walkthrough – Pending Pods | ManagedKube

– #My pod stays pending – Troubleshoot Applications | Kubernetes

QUESTION 45

You want to find out when users were added to Google Cloud Spanner Identity and Access Management (IAM) roles on your Google Cloud Platform (GCP) project.

What should you do in the Google Cloud Console?

- A. Open the Google Cloud Spanner console to review configurations.

- B. Open the IAM & admin console to review IAM policies for Google Cloud Spanner roles.

- C. Go to the Stackdriver Monitoring console and review information for Google Cloud Spanner.

- D. Go to the Stackdriver Logging console, review admin activity logs, and filter them for Google Cloud Spanner IAM roles.

Correct Answer: D

Reference contents:

– #Admin Activity audit logs – Cloud Audit Logs overview
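IAM changes are recorded as Admin Activity audit log entries, so answer D reduces to a Cloud Logging query. The Python sketch below builds one. It assumes that the relevant entries carry a `SetIamPolicy` method name, and that they come from `spanner.googleapis.com` for instance- or database-level grants or from `cloudresourcemanager.googleapis.com` for project-level grants of `roles/spanner.*`. Check both assumptions against a real entry in your project. The project ID is a placeholder.

```python
def spanner_iam_change_filter(project_id: str) -> str:
    """Cloud Logging query for Admin Activity entries that changed IAM policies
    on Spanner resources or on the project itself."""
    log_name = f"projects/{project_id}/logs/cloudaudit.googleapis.com%2Factivity"
    return (
        f'logName="{log_name}" '
        'AND protoPayload.methodName:"SetIamPolicy" '          # ':' is a substring match
        'AND (protoPayload.serviceName="spanner.googleapis.com" '
        'OR protoPayload.serviceName="cloudresourcemanager.googleapis.com")'
    )


print(spanner_iam_change_filter("my-project"))
```

Paste the output into the Logs Explorer, or pass it to `gcloud logging read` together with `--project`. Project-level entries also cover non-Spanner roles, so inspect each entry's policy change to confirm that a Spanner role was involved.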

QUESTION 46

Your company implemented BigQuery as an enterprise data warehouse.

Users from multiple business units run queries on this data warehouse. However, you notice that query costs for BigQuery are very high, and you need to control costs.

Which two methods should you use? (Choose two.)

- A. Split the users from business units to multiple projects.

- B. Apply a user- or project-level custom query quota for BigQuery data warehouse.

- C. Create separate copies of your BigQuery data warehouse for each business unit.

- D. Split your BigQuery data warehouse into multiple data warehouses for each business unit.

- E. Change your BigQuery query model from on-demand to flat rate. Apply the appropriate number of slots to each project.

Correct Answer: B, E

Reference contents:

– Create custom cost controls | BigQuery | Google Cloud

– Pricing | BigQuery: Cloud Data Warehouse

QUESTION 47

You are building a product on top of Google Kubernetes Engine (GKE).

You have a single GKE cluster. For each of your customers, a Pod is running in that cluster, and your customers can run arbitrary code inside their Pod. You want to maximize the isolation between your customers’ Pods.

What should you do?

- A. Use Binary Authorization and whitelist only the container images used by your customers' Pods.

- B. Use the Container Analysis API to detect vulnerabilities in the containers used by your customers' Pods.

- C. Create a GKE node pool with a sandbox type configured to gvisor. Add the parameter runtimeClassName: gvisor to the specification of your customers' Pods.

- D. Use the cos_containerd image for your GKE nodes. Add a nodeSelector with the value cloud.google.com/gke-os-distribution: cos_containerd to the specification of your customers' Pods.

Correct Answer: C

Reference contents:

– Google Kubernetes Engine (GKE)

– GKE Sandbox | Kubernetes Engine Documentation | Google Cloud
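Answer C has two halves. First, the sandbox node pool is created with something like `gcloud container node-pools create sandbox-pool --cluster=CLUSTER --image-type=cos_containerd --sandbox type=gvisor`, where the pool and cluster names are placeholders. Second, each customer Pod opts in through `runtimeClassName`. The Python sketch below builds such a Pod spec. The customer name and image are hypothetical.

```python
import json


def sandboxed_pod(customer: str, image: str) -> dict:
    """Pod spec for one customer's workload, run under GKE Sandbox (gVisor)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": f"customer-{customer}"},
        "spec": {
            # Selects the gvisor RuntimeClass that GKE Sandbox provides, so the
            # container runs inside gVisor's user-space kernel on a sandbox node.
            "runtimeClassName": "gvisor",
            "containers": [{"name": "workload", "image": image}],
        },
    }


print(json.dumps(sandboxed_pod("acme", "example.com/customers/acme:latest"), indent=2))
```

gVisor puts its own kernel between the customer's arbitrary code and the node's host kernel. Options A and B only check which images run, not what the code inside them can do.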

QUESTION 48

Your customer has implemented a solution that uses Google Cloud Spanner and notices some read latency-related performance issues on one table.

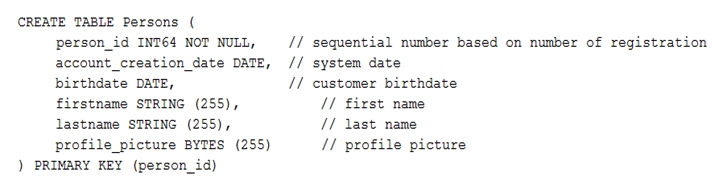

This table is accessed only by their users using a primary key. The table schema is shown below.

You want to resolve the issue.

What should you do?

- A. Remove the profile_picture field from the table.

- B. Add a secondary index on the person_id column.

- C. Change the primary key to not have monotonically increasing values.

- D. Create a secondary index using the following Data Definition Language (DDL):

```sql
CREATE INDEX person_id_ix
ON Persons (
  person_id,
  firstname,
  lastname
) STORING (
  profile_picture
)
```

Correct Answer: C

Reference contents:

– Schema design best practices | Cloud Spanner

– Cloud Spanner — Choosing the right primary keys | by Robert Kubis | Google Cloud – Community | Medium
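Answer C removes the write and read hotspot caused by monotonically increasing keys, which concentrate traffic on one split. Two common ways to generate non-sequential keys appear in the Python sketch below. The first is a random version-4 UUID. The second bit-reverses an existing sequence number, limited to 63 bits so that the result stays a positive INT64. Which of the two the customer's schema would use is an assumption, since the schema image is not reproduced here.

```python
import uuid


def bit_reverse(n: int, width: int = 63) -> int:
    """Reverse the low `width` bits of n so consecutive IDs land far apart."""
    if not 0 <= n < 2 ** width:
        raise ValueError(f"n must fit in {width} unsigned bits")
    result = 0
    for _ in range(width):
        result = (result << 1) | (n & 1)  # move n's lowest bit into result
        n >>= 1
    return result


def new_person_id() -> str:
    """Random version-4 UUID string: no ordering, so rows spread across splits."""
    return str(uuid.uuid4())


for seq in (1, 2, 3):
    print(seq, bit_reverse(seq))
print(new_person_id())
```

Consecutive sequence numbers 1, 2, and 3 map to values near the top of the key space, far from each other. Inserts and point reads therefore spread across splits instead of piling onto the newest one.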

QUESTION 49

Your finance team wants to view the billing report for your projects.

You want to make sure that the finance team does not get additional permissions to the project.

What should you do?

- A. Add the group for the finance team to the roles/billing.user role.

- B. Add the group for the finance team to the roles/billing.admin role.

- C. Add the group for the finance team to the roles/billing.viewer role.

- D. Add the group for the finance team to the roles/billing.projectManager role.

Correct Answer: C

Reference contents:

– Overview of Cloud Billing access control

QUESTION 50

Your organization has strict requirements to control access to Google Cloud projects.

You need to enable your Site Reliability Engineers (SREs) to approve requests from the Google Cloud support team when an SRE opens a support case. You want to follow Google-recommended practices.

What should you do?

- A. Add your SREs to roles/iam.roleAdmin role.

- B. Add your SREs to roles/accessapproval.approver role.

- C. Add your SREs to a group and then add this group to the roles/iam.roleAdmin role.

- D. Add your SREs to a group and then add this group to roles/accessapproval.approver role.

Correct Answer: D
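Answer D follows the same group-based pattern as Question 2: grant the role to a group once and manage the SREs through group membership. The Python sketch below builds the binding command. The project ID and group address are hypothetical.

```python
from typing import List


def grant_role_to_group(project_id: str, group_email: str, role: str) -> List[str]:
    """gcloud argv that binds `role` on the project to a Google group."""
    return [
        "gcloud", "projects", "add-iam-policy-binding", project_id,
        f"--member=group:{group_email}",
        f"--role={role}",
    ]


print(" ".join(grant_role_to_group("my-project", "sre-team@example.com",
                                   "roles/accessapproval.approver")))
```

When SREs join or leave, only the group membership changes. The project's IAM policy stays untouched.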

